All without changing any code just a configuration file.Īt some point in your journey you will get to a point where Keras starts limiting what you are able to do. Then when you are ready for production you can swap out the backend for TensorFlow and have it serving predictions on a Linux server. If using Keras directly you can use PlaidML backend on MacOS with GPU support while developing and creating your ML model. This kind of backend agnostic framework is great for developers. Although one of my favorite libraries PlaidML have built their own support for Keras. Using Keras you can swap out the “backend” between many frameworks in eluding TensorFlow, Theano, or CNTK officially. Keras is called a “front-end” api for machine learning. TensorFlow is even replacing their high level API with Keras come TensorFlow version 2. Keras is a favorite tool among many in Machine Learning. Implementing Swish Activation Function in Keras "Swish as Neural Networks Activation Function". 2008 IEEE International Conference on Acoustics, Speech and Signal Processing: 3265–3268. "Smooth sigmoid wavelet shrinkage for non-parametric estimation". Pastor, Dominique Mercier, Gregoire (March 2008). "Mish: A Self Regularized Non-Monotonic Neural Activation Function". "Sigmoid-Weighted Linear Units for Neural Network Function Approximation in Reinforcement Learning". ^ Elfwing, Stefan Uchibe, Eiji Doya, Kenji ().^ Hendrycks, Dan Gimpel, Kevin (2016).^ a b c d e Ramachandran, Prajit Zoph, Barret Le, Quoc V.It is believed that one reason for the improvement is that the swish function helps alleviate the vanishing gradient problem during backpropagation. In 2017, after performing analysis on ImageNet data, researchers from Google indicated that using this function as an activation function in artificial neural networks improves the performance, compared to ReLU and sigmoid functions. 
When considering positive values, Swish is a particular case of sigmoid shrinkage function defined in (see the doubly parameterized sigmoid shrinkage form given by Equation (3) of this reference).

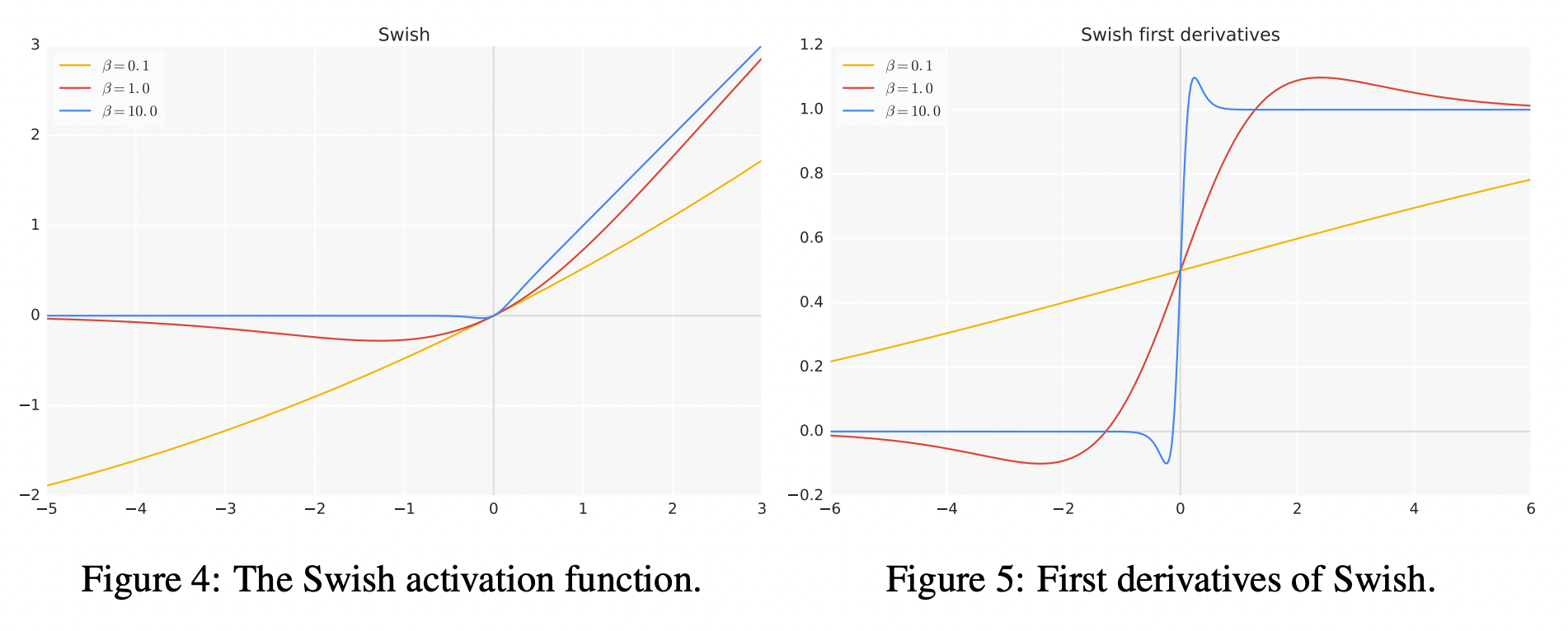

This function uses non-monotonicity, and may have influenced the proposal of other activation functions with this property such as Mish. Thus, it can be viewed as a smoothing function which nonlinearly interpolates between a linear function and the ReLU function. With β → ∞, the sigmoid component approaches a 0-1 function pointwise, so swish approaches the ReLU function pointwise. For β = 0, the function turns into the scaled linear function f( x) = x/2. The swish paper was then updated to propose the activation with the learnable parameter β, though researchers usually let β = 1 and do not use the learnable parameter β. The SiLU/SiL was then rediscovered as the swish over a year after its initial discovery, originally proposed without the learnable parameter β, so that β implicitly equalled 1. The SiLU was later rediscovered in 2017 as the Sigmoid-weighted Linear Unit (SiL) function used in reinforcement learning. For β = 1, the function becomes equivalent to the Sigmoid Linear Unit or SiLU, first proposed alongside the GELU in 2016. Where β is either constant or a trainable parameter depending on the model. The swish function swish ( x ) = x sigmoid ( β x ) = x 1 + e − β x.
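To make the swish definition x · sigmoid(βx) and its limiting cases concrete, here is a minimal, framework-free sketch in plain Python. It is only an illustration of the formula, not the Keras implementation this post refers to; the helper names `sigmoid`, `swish`, and `relu`, and the choice β = 50 as a stand-in for "large β", are assumptions made for the example.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)  # z < 0 here, so exp(z) stays in (0, 1)
    return ez / (1.0 + ez)


def swish(x: float, beta: float = 1.0) -> float:
    """swish(x) = x * sigmoid(beta * x); beta = 1 corresponds to the SiLU."""
    return x * sigmoid(beta * x)


def relu(x: float) -> float:
    """Rectified linear unit, used here only for comparison."""
    return max(0.0, x)


# Compare the limiting cases described in the text:
# beta = 0 gives x / 2, and a large beta behaves almost like ReLU.
for x in (-3.0, -1.0, -0.1, 0.0, 1.0, 3.0):
    print(
        f"x={x:+.1f}  beta=0: {swish(x, 0.0):+.4f}  "
        f"beta=1: {swish(x, 1.0):+.4f}  "
        f"beta=50: {swish(x, 50.0):+.4f}  relu: {relu(x):+.4f}"
    )
```

In the β = 1 column the value at x = -1 is lower than the values at both x = -3 and x = -0.1, which is the non-monotonic dip mentioned in the definition. In Keras the same expression would typically be written with backend tensor operations (for example `x * K.sigmoid(beta * x)`, assuming `K` refers to `keras.backend`) and passed to a layer as a custom activation; that wiring is not shown here.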
